#356: Tesla’s Investor Day Offered No Flash, All Substance, & More

1. Tesla’s Investor Day Offered No Flash, All Substance

Despite mixed reviews of Tesla’s Investor Day last week, ARK’s Tasha Keeney and Sam Korus found no shortage of important and exciting news. While many investors expected a flashy glimpse of Tesla’s next-generation vehicle, we believe the company shared news more profound than a product prototype: the roadmap for continuous cost declines associated with scaling production.

In our view, Tesla is likely to deliver on three interdependent actions that should reduce vehicle costs by ~50% during the next five years. First, it will produce 100% of the controllers on its next-generation vehicle. Second, it will switch to a 48-volt battery architecture that should reduce power losses 16-fold. Third, it will use local ethernet-connected controllers to reduce the complexity of the wiring harness. These electrical architecture changes should cut costs and give Tesla more control over its supply chain at the component level. They also will enable Tesla to transition its manufacturing to a parallel assembly process, slashing its manufacturing footprint by 40% and its wasted time by 30%.
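The 16-fold figure is consistent with basic resistive-loss arithmetic. The sketch below assumes the comparison is against a conventional 12-volt architecture (the baseline voltage is not stated above) and that the same power is delivered through the same wire; the load and wire resistance values are hypothetical and chosen only for illustration.

```python
def resistive_loss_watts(power_w, bus_voltage_v, wire_resistance_ohm):
    """Return the I^2 * R loss in a wire that delivers power_w at bus_voltage_v."""
    current_a = power_w / bus_voltage_v  # I = P / V
    return current_a ** 2 * wire_resistance_ohm  # P_loss = I^2 * R


# Hypothetical values, for illustration only.
LOAD_W = 240.0           # power drawn by some low-voltage component
WIRE_OHM = 0.05          # resistance of the wire feeding it
BASELINE_VOLTS = 12.0    # assumed baseline; not stated in the text above
NEW_VOLTS = 48.0         # the 48-volt architecture described above

loss_baseline = resistive_loss_watts(LOAD_W, BASELINE_VOLTS, WIRE_OHM)
loss_new = resistive_loss_watts(LOAD_W, NEW_VOLTS, WIRE_OHM)
reduction_factor = loss_baseline / loss_new

print(f"Loss at {BASELINE_VOLTS:.0f} V: {loss_baseline:.2f} W")
print(f"Loss at {NEW_VOLTS:.0f} V: {loss_new:.2f} W")
print(f"Reduction factor: {reduction_factor:.0f}x")
```

Under these assumptions, quadrupling the voltage cuts the current to one quarter, and because loss scales with the square of the current, the loss falls by a factor of 4 squared, or 16, regardless of the specific load or resistance chosen.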

By reducing its factory footprint, Tesla will be able to accelerate Gigafactory production, increasing the scaling velocity of both its fleet and its data engine. Tesla’s fleet currently drives more than 120 million miles per day in total and has driven ~100 million miles cumulatively with full self-driving (FSD), its most advanced driver assistance. In contrast, Cruise and Waymo have each logged one million cumulative miles with no one behind the wheel on public roads. While not a perfect comparison, relative to its autonomous driving competition, Tesla vehicles have traveled ~100X the cumulative miles and have collected ~50,000X the data. According to our research, data will be critical in the race to create and scale a fully autonomous taxi service.

In short, Tesla’s vertical integration seems to have given the company an edge that could take its less-integrated competitors years to replicate, if they ever do. Don’t be fooled by tepid reviews.

2. AlphaFold’s Capabilities Activate Cyclic Peptides

Accurately predicting the 3D structures of proteins, the AlphaFold deep learning model has taken life sciences by storm. Though imperfect, AlphaFold’s predictions are helping scientists generate and test hypotheses much more quickly and economically than historically has been the case. Since AlphaFold was open-sourced in 2021, several research groups have built competitive models, RoseTTAFold included, or built new models on top of AlphaFold. Earlier this week, researchers extended AlphaFold’s scope to include cyclic peptides, a class of proteins with significant therapeutic potential.

Protein-based medicines can deliver significant benefits but face several obstacles. First, because they degrade quickly and cannot escape the digestive system, the drugs are not available orally. Second, because of their size and charged nature, they struggle to permeate cell membranes. That said, protein-based drugs are less toxic and often are highly specific relative to their targets.

Cyclic peptides, linear proteins stitched into a circle, are more rigid and less likely to degrade than most protein-based medicines, though they still struggle to permeate cells. Because few experimentally validated structures exist for cyclic peptides, AlphaFold had not predicted them very well until now.

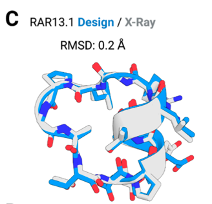

In a game-changing move, researchers used a novel positional encoding framework–called a “cyclic offset”–to help AlphaFold understand that the predicted structures are circular, not linear. Adding cyclic peptide training data, they improved AlphaFold’s ability to predict cyclic peptide structures, as shown below: while the gray backbone represents the structure determined structurally, the blue backbone represents Alphafold’s prediction.

Source: Rettie, S. A. et al. 2023. “Cyclic Peptide Structure Prediction And Design Using AlphaFold.” bioRxiv preprint. https://www.biorxiv.org/content/10.1101/2023.02.25.529956v1.full.pdf
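To make the “cyclic offset” idea concrete, here is a minimal sketch of how a relative-position matrix might differ between a linear chain and a ring. It is an illustration under stated assumptions, not the authors’ implementation: the function names are hypothetical, and the sign convention (positive means forward along the sequence) and tie-breaking rule are choices made here for clarity.

```python
def linear_offsets(n):
    """Relative offsets for a linear chain: entry [i][j] is j - i."""
    return [[j - i for j in range(n)] for i in range(n)]


def cyclic_offsets(n):
    """Relative offsets for a ring of n residues (illustrative sketch).

    Entry [i][j] is the signed shortest path from residue i to residue j
    around the ring, so the first and last residues become neighbors.
    Ties (exactly half way around) are resolved as a forward step.
    """
    offsets = []
    for i in range(n):
        row = []
        for j in range(n):
            steps = (j - i) % n      # forward steps around the ring, 0..n-1
            if steps > n // 2:       # shorter to walk backward instead
                steps -= n
            row.append(steps)
        offsets.append(row)
    return offsets


if __name__ == "__main__":
    n = 6
    print("linear, residue 0:", linear_offsets(n)[0])
    print("cyclic, residue 0:", cyclic_offsets(n)[0])
```

In the linear encoding, the first and last residues of a six-residue peptide sit five positions apart; in the cyclic version they are one step apart, which is the information a model would need to treat the chain as closed.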

Many of the cyclic peptide study authors have published on the issue of cell permeability. According to some of them, AfCycDesign, the name the authors give their modified AlphaFold approach, should lower the barriers to generating cyclic peptide molecules with drug-like properties. Apparently, Vilya, a therapeutics company that recently raised $50 million in a Series A round led by ARCH Venture Partners, houses much of this intellectual property.

3. OpenAI Releases New APIs And Changes Terms Of Service

Last week, OpenAI announced a slate of developments that should galvanize further innovation.

First, the company now allows third-party developers to integrate ChatGPT into their apps and services via a new API that is much less expensive than existing language models. According to OpenAI, the ChatGPT API can do more than create an AI-powered chat interface. Its new model family, gpt-3.5-turbo, is the “best model for many non-chat use cases.” OpenAI is offering 1,000 tokens for $0.002, 10x cheaper than GPT-3.5 models delivered only three months ago.
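The pricing above can be turned into a quick back-of-the-envelope calculation. The sketch below uses the quoted $0.002 per 1,000 tokens and infers the earlier price from the “10x cheaper” statement; the monthly token volume is a hypothetical workload, not a figure from OpenAI.

```python
NEW_PRICE_PER_1K_USD = 0.002                        # quoted gpt-3.5-turbo price
PRIOR_PRICE_PER_1K_USD = NEW_PRICE_PER_1K_USD * 10  # implied by "10x cheaper"


def token_cost_usd(tokens, price_per_1k_usd):
    """Return the cost in dollars of processing `tokens` tokens."""
    return tokens / 1000 * price_per_1k_usd


# Hypothetical workload: one million tokens per month.
MONTHLY_TOKENS = 1_000_000

new_cost = token_cost_usd(MONTHLY_TOKENS, NEW_PRICE_PER_1K_USD)
prior_cost = token_cost_usd(MONTHLY_TOKENS, PRIOR_PRICE_PER_1K_USD)

print(f"New price:   ${new_cost:.2f} per {MONTHLY_TOKENS:,} tokens")
print(f"Prior price: ${prior_cost:.2f} per {MONTHLY_TOKENS:,} tokens")
```

At that volume, the workload would cost roughly $2 at the new price versus roughly $20 at the implied earlier price.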

Second, OpenAI is making its AI-powered speech-to-text model, Whisper, available for use through a new API.

Third, OpenAI announced policy changes based on developer feedback. Importantly, the service will use data submitted through the API to train its models only if customers opt in.

Finally, OpenAI is working to improve its uptime, and its engineering team’s top priority is stabilizing production use cases.
