Data has always been critical to business decision making. Companies have devoted significant resources to expanding into the digital universe, collecting, structuring, and analyzing data such as customer information, purchase orders, inventory tracking, and transactional records.

As businesses have digitized operations, the amount of data has grown faster than their ability to process and organize it. They desperately need tools to capture the digital breadcrumbs that customers leave behind.

Behavior that once happened in-store or over the phone is moving online, generating orders of magnitude more data. As a result, businesses can answer many more questions about their customers. How long do customers browse before making a selection? Is there a stage in the transaction process that takes abnormally long? What items do customers buy together? Are searches for additional items going unfulfilled?

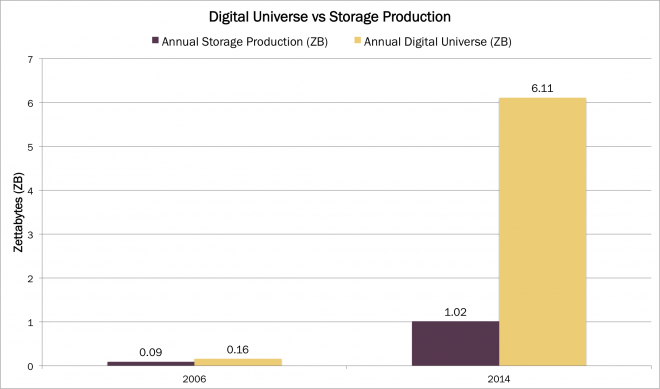

These digital breadcrumbs are valuable, but storing and analyzing them properly is challenging. Historically, the digital universe, or the amount of data created, replicated, and consumed each year, closely tracked digital storage capacity. For example, in 2006 the digital universe was 1.7 times greater than annual storage production.[1] Now it outstrips capacity by roughly 500%, as shown below.

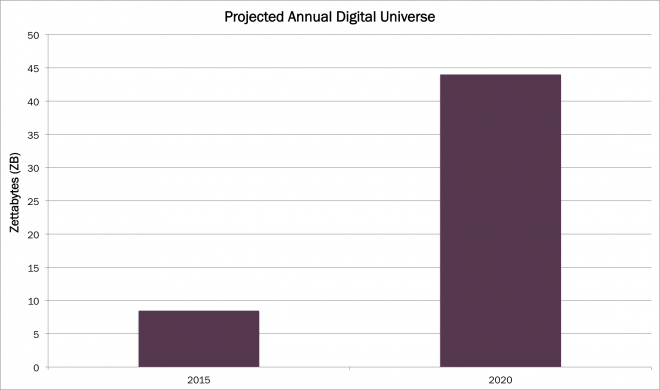

According to the IDC and EMC digital universe study, the growth of the digital universe is accelerating. As shown below, it grew at a compound annual rate of 25% from 2006 to 2014 but is projected to grow 39% annually over the next five years, from 8.5 zettabytes (ZB)[2] at the end of 2015 to 44 ZB by 2020.
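As a quick sanity check on the study's projection, the sketch below computes the compound annual growth rate implied by growth from 8.5 ZB to 44 ZB. It assumes five full compounding periods between the end of 2015 and the end of 2020; the function name `cagr` is illustrative, not part of any library.

```python
def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate that turns `start` into `end` over `years` periods."""
    return (end / start) ** (1 / years) - 1


# Figures quoted from the IDC/EMC study (zettabytes).
start_2015_zb = 8.5
end_2020_zb = 44.0

# Assumption: five compounding periods, end of 2015 to end of 2020.
rate = cagr(start_2015_zb, end_2020_zb, years=5)

# Reverse check: compound the rounded 39% rate forward from the 2015 base.
projected_2020_zb = start_2015_zb * (1 + 0.39) ** 5

print(f"Implied CAGR 2015-2020: {rate:.1%}")
print(f"8.5 ZB grown at 39%/yr for 5 years: {projected_2020_zb:.1f} ZB")
```

The implied rate comes out just under 39%, and compounding 39% forward lands at roughly 44 ZB, so the two quoted endpoints and the quoted growth rate are mutually consistent.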

Businesses are inundated with more data than they can handle and need new tools to extract insights from it. Big data analytics and visualization companies provide solutions for the volume and variety of data being produced. Companies like New Relic [NEWR], Splunk [SPLK], Tableau [DATA], Qlik [QLIK], Hortonworks [HDP], and Cloudera help companies transform rivers of data into useful information.

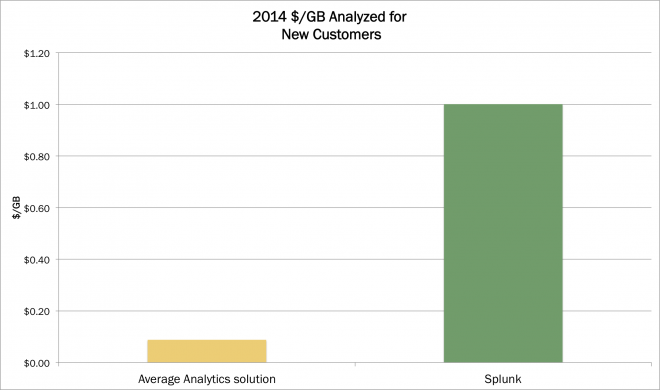

From a $7B market in 2014, data analytics is poised to grow several times larger. Of the 6.1 ZB of data generated in 2014, 22% contained valuable information but only 1% was analyzed, according to IDC and EMC, suggesting a cost of roughly $0.10 per GB analyzed.[3][4] Though prices are declining, if all valuable data were analyzed at $0.10/GB, the total addressable analytics market would measure in the hundreds of billions of dollars.
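The arithmetic behind the cost-per-GB and market-size figures can be sketched as follows. This assumes decimal units (1 ZB = 10^12 GB) and treats the entire $7B market as spending on the 1% of data that was analyzed; the 2020 line additionally assumes, hypothetically, that the 22% "valuable" share holds constant as the digital universe grows to 44 ZB. Function and variable names are illustrative.

```python
GB_PER_ZB = 10**12  # decimal units: 1 ZB = 10^21 bytes, 1 GB = 10^9 bytes

# 2014 figures quoted from IDC/EMC and the market estimate above.
data_2014_zb = 6.1
valuable_share = 0.22
analyzed_share = 0.01
market_2014_usd = 7e9


def addressable_market_usd(total_zb: float, share: float, price_per_gb: float) -> float:
    """Spend required to analyze `share` of `total_zb` zettabytes at `price_per_gb`."""
    return total_zb * share * GB_PER_ZB * price_per_gb


# Implied average cost: market size divided by the gigabytes actually analyzed.
analyzed_gb = data_2014_zb * analyzed_share * GB_PER_ZB
cost_per_gb = market_2014_usd / analyzed_gb

# Market if all valuable 2014 data were analyzed at $0.10/GB.
tam_2014_usd = addressable_market_usd(data_2014_zb, valuable_share, 0.10)

# Hypothetical: same 22% share and $0.10/GB applied to the projected 44 ZB in 2020.
tam_2020_usd = addressable_market_usd(44.0, valuable_share, 0.10)

print(f"Implied 2014 cost: ${cost_per_gb:.2f} per GB")
print(f"All valuable 2014 data at $0.10/GB: ${tam_2014_usd / 1e9:.0f}B")
print(f"Same assumptions on 2020 volume: ${tam_2020_usd / 1e9:.0f}B")
```

On 2014 volumes alone the result is already well above $100 billion, and because the figure scales linearly with data volume, it rises toward the high hundreds of billions on the projected 2020 volume unless the price per GB falls.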

The total addressable market will depend on how fast the cost per GB analyzed declines. Companies with truly innovative and useful data platforms will have an advantage because superior technology creates a pricing moat. For example, Splunk, which specializes in capturing disparate data streams and rendering them comparable, has maintained pricing well above the average cost per GB. By our calculations, it generated $1.00 per GB analyzed in 2014. Interestingly, even at this price point Splunk is taking share, suggesting that its analytics add considerable value.

Clearly Splunk, New Relic, Hortonworks, Cloudera, and even mature companies such as SAP and IBM are capitalizing on the earliest innings of the data inundation game. While companies have always collected data, and the transaction ledger has been valuable for centuries, bits are now flooding the so-called ledger book. The advantage will lie with the makers of real-time ledger books who can transform the torrent of data into actionable knowledge.
