One of the most widely debated questions in computing today is: what is the addressable market for accelerators such as GPUs (graphics processing units), FPGAs (field-programmable gate arrays), and ASICs (application-specific integrated circuits)? Today accelerators are used selectively in servers to speed up workloads such as artificial intelligence (AI), search, and video transcoding. As Moore’s Law slows down and AI grows as a proportion of compute load, accelerator penetration will trend higher. The question is, how much higher?

One way to answer this question is to look at the adoption of accelerators in the high-performance computing (HPC) market. HPC operates at the high end of the server market in applications like supercomputing, oil and gas exploration, and molecular biology simulations. Long before deep learning became fashionable, HPC customers turned to accelerators to speed up algorithms used in scientific computing. Today, HPC is a leading indicator of accelerator adoption, offering insights into potential penetration and market opportunity.

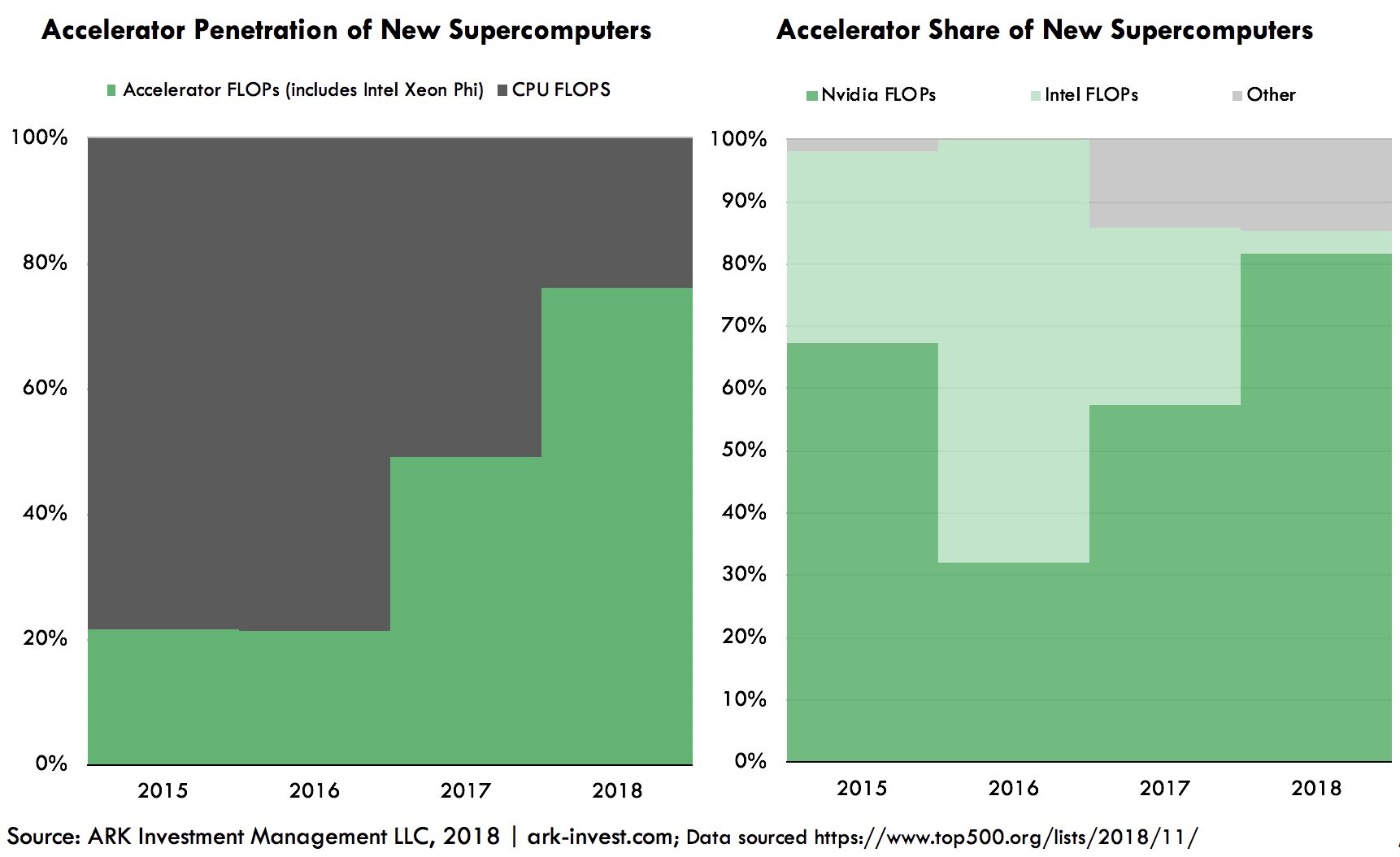

In 2010, the HPC accelerator opportunity began in earnest with the introduction of Nvidia’s Fermi GPU. Fermi enabled 64-bit floating point operations and error-correcting code memory (ECC memory), two features critical for scientific computing. Since then, GPUs have featured prominently in major facilities including the Summit supercomputer at Oak Ridge National Laboratory and the Sierra supercomputer at Lawrence Livermore National Laboratory. As a result, the share of new computing power enabled by GPUs in the world’s Top 500 supercomputers has increased from 20% in 2010 to 76% in 2018, as shown below.

While accelerators have transformed the HPC market, enterprises and hyperscale data centers still rely on conventional CPU (central processing unit) servers. That said, the trends that drove accelerator adoption in HPC—the slowdown of Moore’s Law and the growth of compute-intensive workloads such as AI—are going mainstream in data centers. If the data center market as a whole were to follow in the footsteps of HPC, accelerators could displace Intel CPUs as the workhorse of enterprise computing.

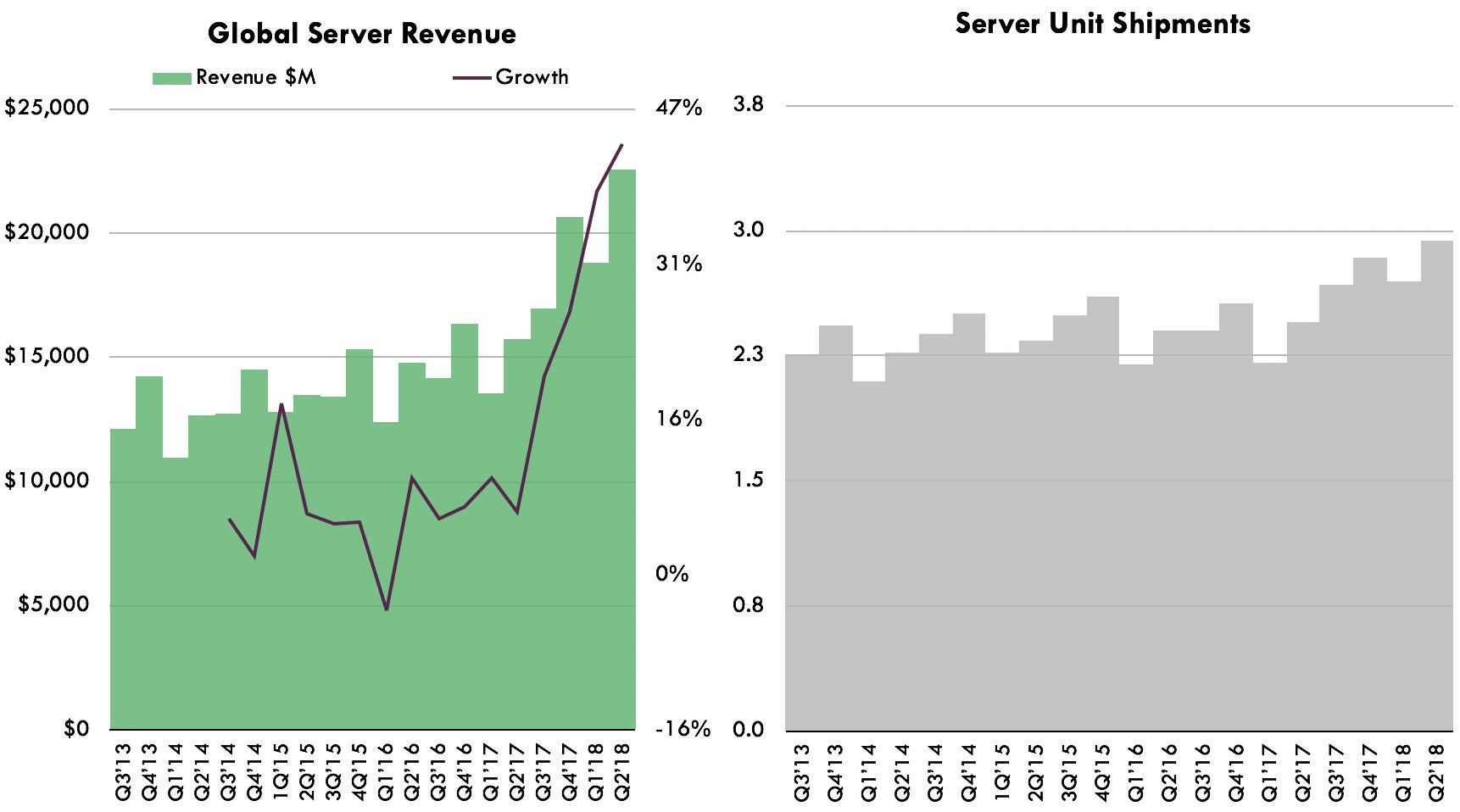

If processors were to grow to 50% of total server component costs, with accelerators capturing 70% of processors, as is the case in the HPC market today, the market for accelerators could scale from $4 billion [1] today to $24 billion. Currently, the size of the computer server market is $80 billion globally, according to IDC. If manufacturers take a 15% cut, then $68 billion accrues to component suppliers such as Intel, Micron, and Nvidia. Today we estimate that processors account for roughly 35% of server component costs, with the balance allocated to memory, storage, and networking. With HPC and AI, servers are evolving toward higher compute configurations, pushing processors and accelerators to 50% of total component costs.
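The sizing arithmetic in this paragraph can be sketched in a few lines of Python. This is only a back-of-the-envelope illustration: the inputs are the article's estimates ($80 billion server market, 15% manufacturer cut, 50% processor share, 70% accelerator share), and the function and variable names are hypothetical.

```python
# Back-of-the-envelope accelerator market sizing.
# All inputs are the article's estimates; names are illustrative only.

def accelerator_tam(server_market_bn, manufacturer_cut, processor_share, accelerator_share):
    """Return the accelerator market in billions of dollars."""
    # Revenue left for component suppliers after the server maker's cut.
    component_spend = server_market_bn * (1 - manufacturer_cut)
    # Portion of component spend that goes to processors of any kind.
    processor_spend = component_spend * processor_share
    # Portion of processor spend captured by accelerators.
    return processor_spend * accelerator_share

hpc_like_tam = accelerator_tam(
    server_market_bn=80.0,   # global server market, per IDC
    manufacturer_cut=0.15,   # assumed server manufacturer cut
    processor_share=0.50,    # processors as a share of component costs
    accelerator_share=0.70,  # accelerators as a share of processors (HPC-like)
)
print(f"Accelerator market: ${hpc_like_tam:.1f} billion")  # about $23.8 billion
```

The result, about $23.8 billion, rounds to the $24 billion figure quoted in the text.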

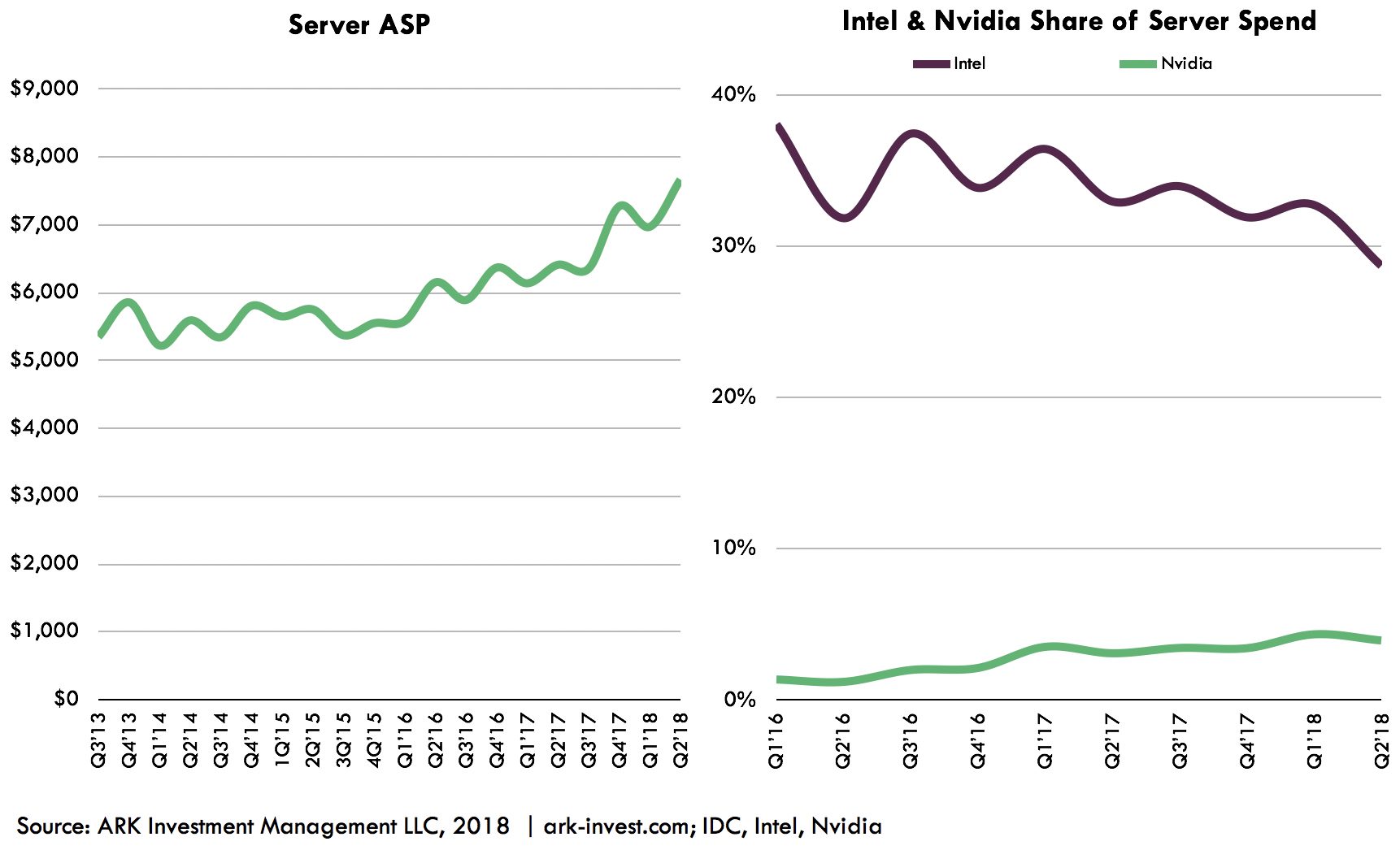

As shown by the latest IDC data above, the server market as a whole continues to grow, driven primarily by higher average selling prices (ASPs). With denser configurations, server ASPs could climb from today’s price of $8,000 to $10,000. Even at the current volume of 10 million units per year, that would grow the server market 25% from $80 billion to $100 billion, pushing the accelerator total addressable market to roughly $30 billion under the same component-share assumptions.
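As a rough check on the higher-ASP scenario, the sketch below recomputes the server market from unit volume and price, then applies the shares used earlier in the article (15% manufacturer cut, 50% processor share, 70% accelerator share). Carrying those shares over to this scenario is an assumption, and the variable names are illustrative.

```python
# Higher-ASP scenario: same unit volume, higher average selling price.
# Figures are the article's estimates; variable names are illustrative.

units_per_year = 10_000_000
current_asp = 8_000    # dollars per server today
future_asp = 10_000    # dollars per server with denser configurations

current_market_bn = units_per_year * current_asp / 1e9
future_market_bn = units_per_year * future_asp / 1e9
market_growth = future_market_bn / current_market_bn - 1

# Assumed to carry over from the HPC-like case earlier in the article.
manufacturer_cut = 0.15
processor_share = 0.50
accelerator_share = 0.70

future_tam_bn = (
    future_market_bn * (1 - manufacturer_cut) * processor_share * accelerator_share
)

print(f"Server market: ${current_market_bn:.0f}B -> ${future_market_bn:.0f}B "
      f"({market_growth:.0%} growth)")
print(f"Accelerator market: about ${future_tam_bn:.2f} billion")
```

Under these assumptions the accelerator market comes out a little under $30 billion.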

Today, the accelerator market is dominated by Nvidia’s data center products, which we estimate will generate $3.2 billion of revenue in 2018. Nvidia estimates that its data center business has a total addressable market of $50 billion. That figure is larger than Intel’s entire data center business, which makes it difficult to reconcile. That said, as the latest data from IDC and the Top 500 show, the server market is undergoing a fundamental shift. The decay of Moore’s Law has increased total server spend as compute-intensive workloads migrate from CPUs to specialized processors. If CPUs and accelerators were to swap places in the data center, as they did in HPC, the accelerator market would approach Nvidia’s estimate, implying a 10x increase over the next five to ten years.
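To put a 10x increase over five to ten years in annual terms, the sketch below converts a growth multiple into an implied compound annual growth rate. The article does not state these rates; they are derived here from its 10x figure, and the helper name is hypothetical.

```python
# Implied compound annual growth rate (CAGR) for a given growth multiple.
# The 10x multiple and the 5- and 10-year horizons come from the article;
# the rates themselves are derived here, not stated in the source.

def implied_cagr(multiple, years):
    """Annual growth rate that compounds to `multiple` over `years`."""
    return multiple ** (1 / years) - 1

for years in (5, 10):
    print(f"10x over {years} years: {implied_cagr(10, years):.1%} per year")
```

A 10x increase works out to roughly 58% annual growth over five years, or roughly 26% over ten.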
